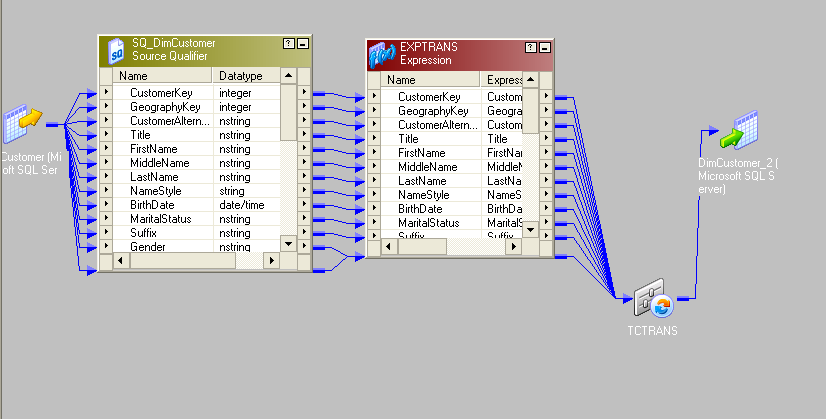

In the transformation stage, the data is processed so that its structure is consistent and uniform before it moves on to the final step. Common transformations include aggregation, filtering, and normalization. The goal is to prepare the data for analytics and other downstream activities. Generally, during transformation, the data is uploaded into a staging database before being loaded into the target data source. That way, you can return to the earlier state if something goes wrong.
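As a rough illustration of the three transformations named above, here is a minimal Python sketch. The row schema (`country`, `amount`) and the `transform` function are hypothetical and chosen only for this example; a real pipeline would write the result to a staging database rather than keep it in a variable.

```python
from collections import defaultdict


def transform(rows):
    """Filter, normalize, and aggregate raw rows (hypothetical schema)."""
    # Filtering: drop rows that have no amount.
    kept = [r for r in rows if r.get("amount") is not None]

    # Normalization: uniform country codes and numeric amounts.
    normalized = [
        {"country": r["country"].strip().upper(), "amount": float(r["amount"])}
        for r in kept
    ]

    # Aggregation: total amount per country.
    totals = defaultdict(float)
    for r in normalized:
        totals[r["country"]] += r["amount"]
    return dict(totals)


# Sample input, invented for illustration.
raw_rows = [
    {"country": " us", "amount": "10.5"},
    {"country": "US ", "amount": "4.5"},
    {"country": "de", "amount": None},
    {"country": "de", "amount": "7"},
]

# Stand-in for the staging area: the result is held here before any load.
staged = transform(raw_rows)
```

Keeping the transformed result separate from the target until the load step is what makes it possible to fall back to the earlier state.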

Data-driven companies often use ETL to simplify the movement of data and make information more accessible across departments. In the extraction phase, data is gathered from various sources and temporarily stored for the next steps. During this stage, validation rules are applied to ensure the data meets the necessary standards. The data proceeds to the next stage only if validation succeeds.
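The extract-then-validate gate described above could look something like the following Python sketch. The two sources (`crm`, `web`), the row fields, and the validation rules are assumptions made up for illustration; the article does not specify any particular rules.

```python
def validate(row):
    """Return a list of rule violations for one row (empty means valid)."""
    errors = []
    if row.get("id") is None:
        errors.append("missing id")
    if "@" not in (row.get("email") or ""):
        errors.append("invalid email")
    return errors


def extract_and_validate(sources):
    """Gather rows from every source, then hold back rows that fail validation."""
    # Temporary store for everything that was extracted.
    extracted = [row for source in sources for row in source]

    passed, rejected = [], []
    for row in extracted:
        errors = validate(row)
        if errors:
            rejected.append((row, errors))
        else:
            passed.append(row)
    return passed, rejected


# Hypothetical sources, each already read into a list of dicts.
crm = [{"id": 1, "email": "a@example.com"}]
web = [{"id": 2, "email": "not-an-email"}, {"id": None, "email": "b@example.com"}]

passed, rejected = extract_and_validate([crm, web])
```

Only the rows in `passed` would move on to transformation; `rejected` keeps each failing row together with the reasons, which is useful for reporting.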

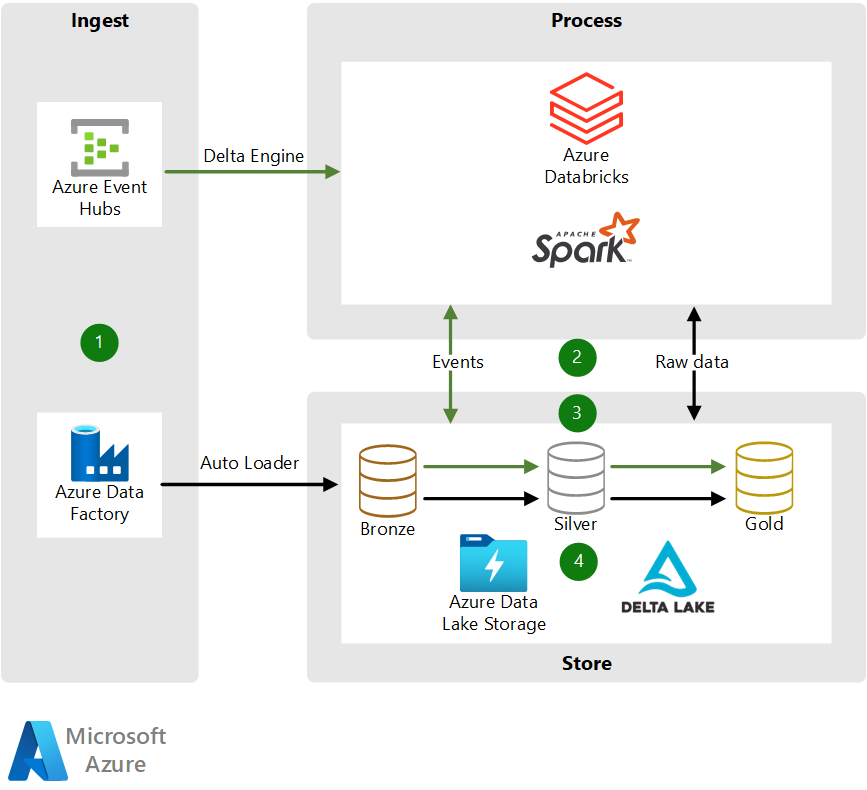

To harness the power of data effectively, the information must first be processed through ETL. One of the earliest techniques to emerge was ETL batch processing, and it continues to be used in many use cases. Popular data warehouses such as Amazon Redshift and Google BigQuery still recommend loading events through files in batches, as this provides much better performance at a much lower cost than direct, individual writes to the tables.

But how does it work, and where is it used? How is it different from streaming ETL processing? No worries! This article provides all the answers on ETL batch processing in a nifty 6-minute read.

What is ETL?

ETL is short for Extract, Transform, and Load. ETL streamlines the process of transferring data from one or more databases to a centralized database.
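To make the idea of loading events through files in batches more concrete, here is a small Python sketch that groups events into fixed-size batches and serializes each batch as CSV text. The helper names (`batches`, `to_csv`), the event fields, and the batch size are assumptions for illustration only; the actual bulk-load step depends on the warehouse and is not shown.

```python
import csv
import io
from itertools import islice


def batches(records, size):
    """Yield successive lists of at most `size` records."""
    it = iter(records)
    while True:
        chunk = list(islice(it, size))
        if not chunk:
            return
        yield chunk


def to_csv(batch, fieldnames):
    """Serialize one batch to CSV text, standing in for a file to bulk-load."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=fieldnames)
    writer.writeheader()
    writer.writerows(batch)
    return buf.getvalue()


# Hypothetical events; in practice these would come from an event stream or log.
events = [{"id": i, "type": "click"} for i in range(5)]

# Five events with a batch size of two produce three CSV payloads.
files = [to_csv(b, ["id", "type"]) for b in batches(events, size=2)]
```

Each element of `files` represents one file that would be handed to the warehouse's bulk loader, instead of issuing one write per event.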
