Data migration can be defined as the movement of data from one system to another, performed as a one-time process. In most cases, it is done to ensure that multiple systems have a copy of the same data. Although this increases the storage requirements for the same data, it makes the data more available and reduces the load on a single system. The first step of data migration is data mapping, in which attributes in the data source are mapped to attributes in the destination.

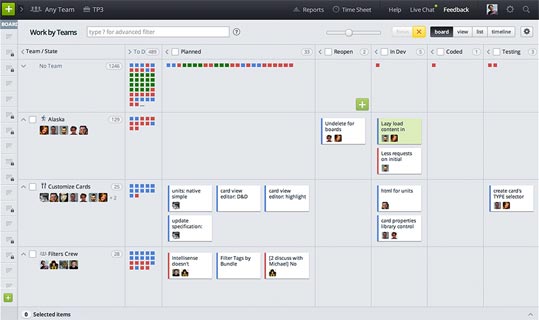

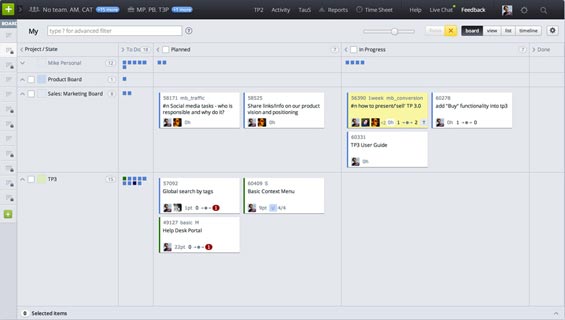

Hevo Data, a No-code Data Pipeline, empowers you to ETL your data from 100+ sources (40+ free sources) to Databases, Data Warehouses, BI tools, or any other destination of your choice in a completely hassle-free & automated manner. Hevo is fully managed and completely automates the process of not only loading data from your desired source but also enriching the data, managing the schema mappings, and transforming it into an analysis-ready form without having to write a single line of code. Hevo’s pre-built connectors streamline your Data Integration tasks and also allow you to scale horizontally, handling millions of records per minute with minimum latency. Hevo’s auto-mapping feature automatically creates and manages all the mappings required for data migration, including creating tables with compatible and optimal data types in the destination schema. It also takes care of periodic reloads and refreshes to ensure that your destination data is always up-to-date.

Understanding the Need to Set Up Source to Target Mapping

Source to Target mapping is an integral part of the data management process. Before any analysis can be performed on the data, it must be homogenized and mapped correctly. Unmapped or poorly mapped data might lead to incorrect or partial insights. Source to Target Mapping assists in three processes of the ETL pipeline, including data integration and data migration.

Data integration can be defined as the process of regularly moving data from one system to another. In most cases, this movement of data is from the operational database to the data warehouse. The mapping defines how data sources are to be connected with the data warehouse during data integration. It sets out instructions on how multiple data sources intersect with each other based on some common information, which data record is preferred if duplicate data is found, and so on.
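The duplicate-handling rules that a mapping defines for data integration can be illustrated with a minimal sketch. The source names (`crm`, `billing`), the shared `email` key, and the priority order are all assumptions made up for this example; they are not taken from any particular tool.

```python
# Hypothetical rule: when the same record appears in several sources,
# values from the source listed earlier are preferred.
SOURCE_PRIORITY = ["crm", "billing"]


def integrate(records_by_source, key="email"):
    """Merge records from several sources on a common key."""
    merged = {}
    # Walk from least to most preferred so preferred values overwrite.
    for source in reversed(SOURCE_PRIORITY):
        for record in records_by_source.get(source, []):
            k = record[key].lower()  # normalize the common key
            present = {f: v for f, v in record.items() if v is not None}
            merged.setdefault(k, {}).update(present)
            merged[k][key] = k
    return merged


sources = {
    "crm": [{"email": "A@example.com", "name": "Ann", "plan": None}],
    "billing": [
        {"email": "a@example.com", "name": "A. Smith", "plan": "pro"},
        {"email": "b@example.com", "name": "Bob", "plan": "free"},
    ],
}
merged = integrate(sources)
```

Here the two records for the same email address are treated as duplicates: the preferred source supplies the name, while the other source fills in the field the preferred one leaves empty.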
The source image above shows three tables: a list of movies, a list of actors, and a third table defining the relationship between those two. The “movieid” and “actorid” attributes in the “casting” table are foreign keys to the “id” attribute in the “movie” table and the “id” attribute in the “actor” table, respectively. It can be observed that these tables are in normalized form. If this data has to be moved to a data warehouse, various complex operations will have to be performed. The database will have to be denormalized so that no complex join operations have to be performed on the large datasets at the time of analysis. The other image shows how the data would ideally be stored in the data warehouse. This requires each attribute in the source to be mapped to an attribute in the target structure before the data transfer process begins. This was a basic example, and real-world situations can become much more complicated based on factors such as the number of relationships between data sources.
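The movie and actor example can be sketched with an in-memory SQLite database. Only the “movie”, “actor”, and “casting” tables and the “id”, “movieid”, and “actorid” attributes come from the example; the `title` and `name` columns, the `movie_cast` target table, its column names, and the sample rows are assumptions added for illustration.

```python
import sqlite3

# Source-to-target mapping: target column -> (source table, source column).
# Target names and the "title"/"name" columns are hypothetical.
MAPPING = {
    "movie_id": ("movie", "id"),
    "movie_title": ("movie", "title"),
    "actor_id": ("actor", "id"),
    "actor_name": ("actor", "name"),
}


def build_source(conn):
    """Create the normalized source tables with a few sample rows."""
    conn.executescript("""
        CREATE TABLE movie (id INTEGER PRIMARY KEY, title TEXT);
        CREATE TABLE actor (id INTEGER PRIMARY KEY, name TEXT);
        CREATE TABLE casting (
            movieid INTEGER REFERENCES movie(id),
            actorid INTEGER REFERENCES actor(id)
        );
        INSERT INTO movie VALUES (1, 'Movie A'), (2, 'Movie B');
        INSERT INTO actor VALUES (10, 'Actor X'), (11, 'Actor Y');
        INSERT INTO casting VALUES (1, 10), (1, 11), (2, 10);
    """)


def denormalize(conn):
    """Build one wide target table by following the foreign keys."""
    select_list = ", ".join(
        f"{table}.{column} AS {target}"
        for target, (table, column) in MAPPING.items()
    )
    conn.execute(f"""
        CREATE TABLE movie_cast AS
        SELECT {select_list}
        FROM casting
        JOIN movie ON movie.id = casting.movieid
        JOIN actor ON actor.id = casting.actorid
    """)
    return conn.execute(
        "SELECT movie_id, movie_title, actor_id, actor_name "
        "FROM movie_cast ORDER BY movie_id, actor_id"
    ).fetchall()


conn = sqlite3.connect(":memory:")
build_source(conn)
rows = denormalize(conn)
```

The joins run once, at load time, so queries against the hypothetical `movie_cast` table need no joins during analysis.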

To understand how Source to Target Mapping works, consider a small database of various movies and actors.

What is Source to Target Mapping in Data Warehouses?

When moving data from one system to another, it’s almost impossible to have a situation where the source and the target system have the same schema. Hence, there is a need for a mechanism that allows users to map attributes in the source system to attributes in the target system. This process becomes even more complicated when data has to be moved to a central data warehouse from various data sources, each having a different schema. Source to Target mapping can be defined as a set of instructions that define how the structure and content in a source system would be transferred and stored in the target system. It can be seen as a set of guidelines for the ETL (Extract, Transform, Load) process that describe how two similar datasets intersect and how new, duplicate, and conflicting data is processed. It also sets out instructions on dealing with multiple data types, unknown members and default values, foreign key relationships, metadata, etc.
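A mapping of this kind can be written down as data rather than prose. The sketch below is one possible shape, not a standard format: the `FieldRule` class, the attribute names, and the default values are all hypothetical. Each rule names the source attribute, the conversion to the target data type, and the default to use for unknown or missing values.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class FieldRule:
    source: str                 # attribute name in the source record
    cast: Callable[[Any], Any]  # conversion to the target data type
    default: Any = None         # used when the source value is missing or empty


# Hypothetical mapping: target attribute -> rule.
CUSTOMER_MAPPING = {
    "customer_id": FieldRule("cust_no", int),
    "full_name": FieldRule("name", str.strip, default="Unknown"),
    "country": FieldRule("ctry", str.upper, default="N/A"),
}


def apply_mapping(record, mapping):
    """Translate one source record into the target structure."""
    target = {}
    for target_name, rule in mapping.items():
        raw = record.get(rule.source)
        if raw is None or raw == "":
            target[target_name] = rule.default
        else:
            target[target_name] = rule.cast(raw)
    return target


row = apply_mapping(
    {"cust_no": "42", "name": "  Ada ", "ctry": ""}, CUSTOMER_MAPPING
)
```

Keeping the rules in one structure means the same mapping can be reviewed, versioned, and reused across loads.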
